Context and stakes: Why this mattered

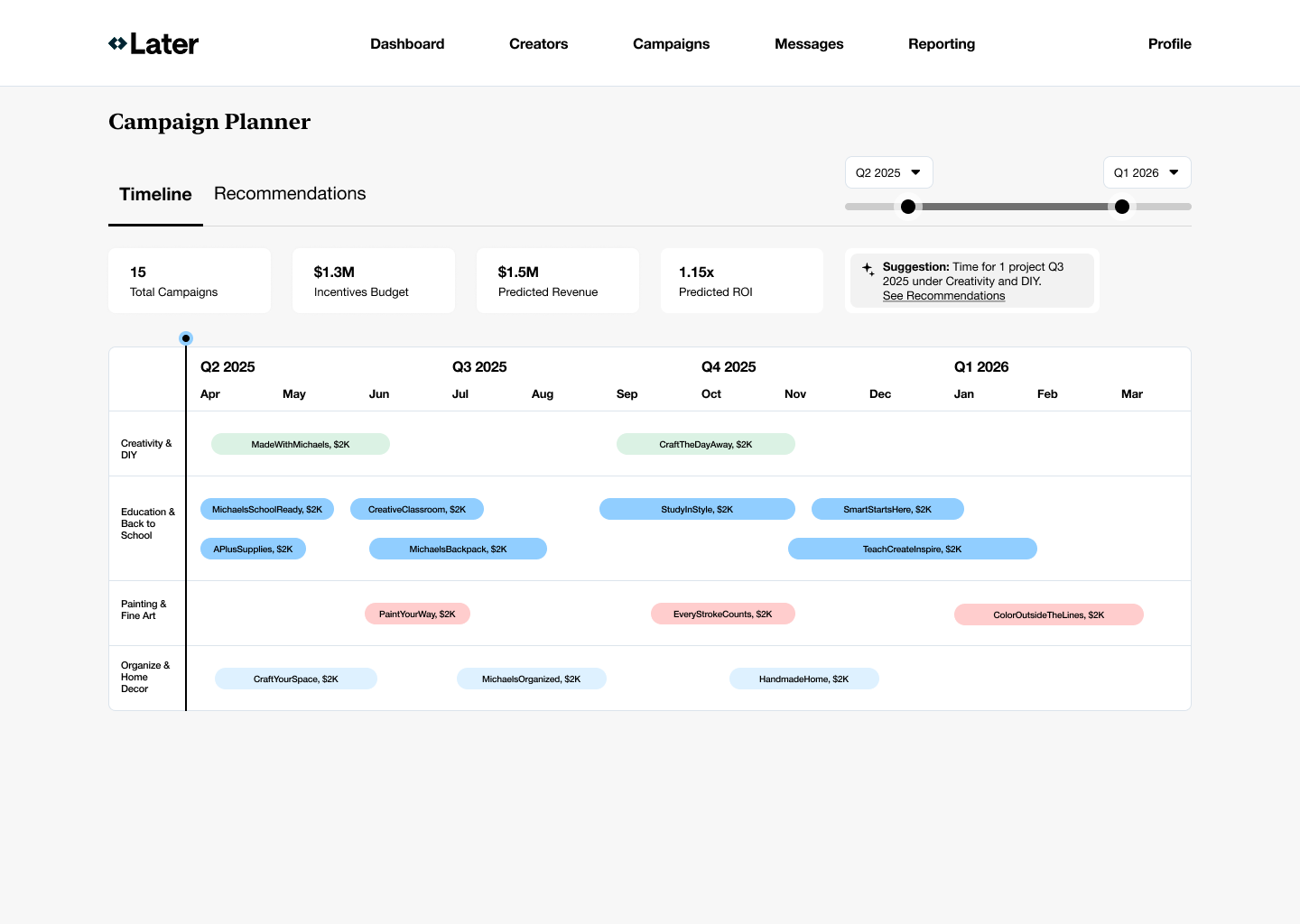

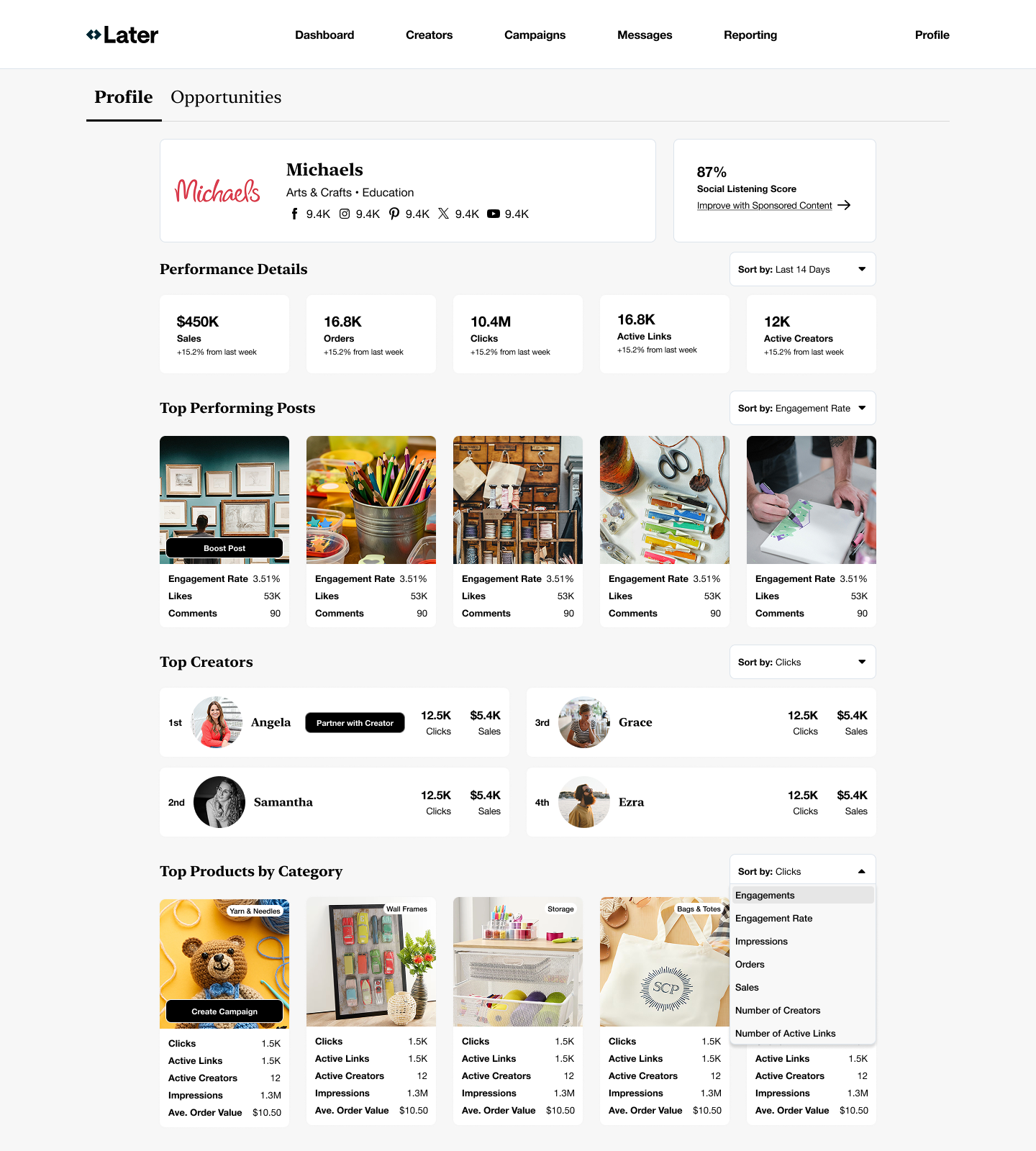

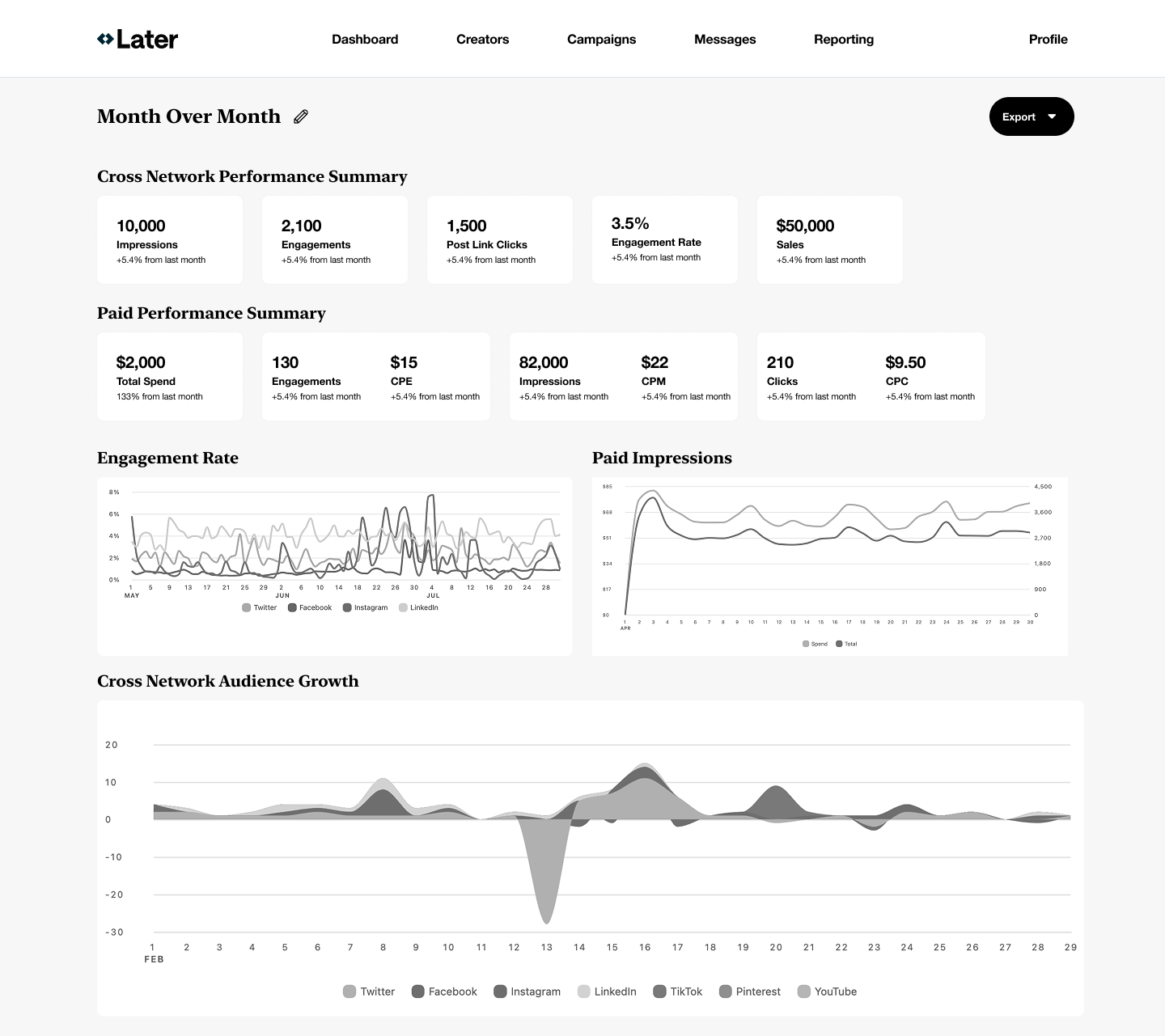

Later had recently acquired Mavely, bringing together two products that served overlapping but differently framed marketer workflows. While both products were successful in isolation, they lacked a shared mental model of how marketers should move from discovery → execution → measurement.

At the same time:

- Leadership needed a clear direction for future investment

- Teams were exploring AI opportunistically, without a unifying experience strategy

- There was a real risk of building disconnected features that increased complexity rather than reduced it

The stakes were not visual consistency; they were strategic system alignment and trust.

Ownership and action: What I took on

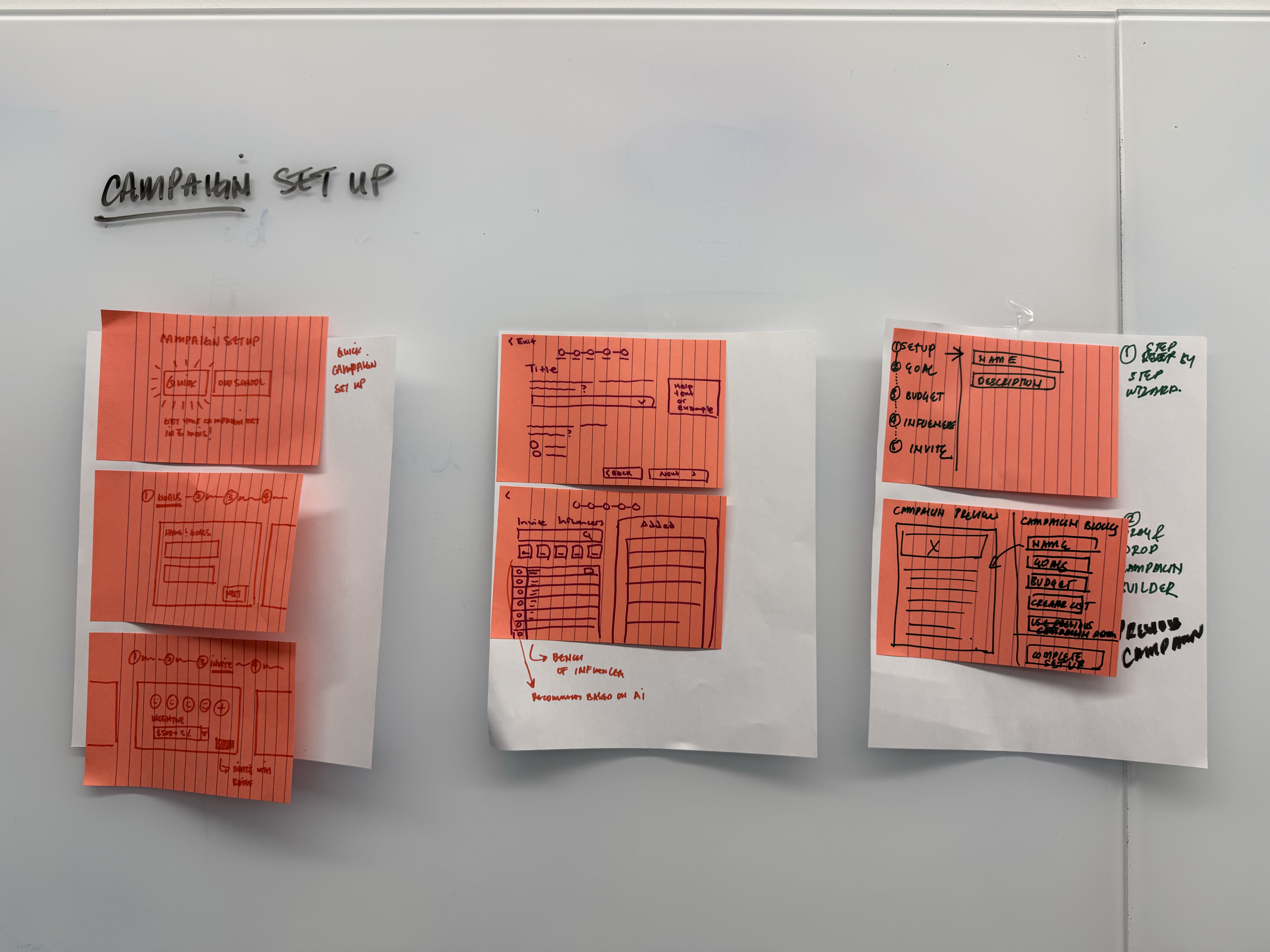

I was asked to lead this work under a 7-day timeline ahead of an executive board meeting.

My responsibility was not to design production-ready UX, but to:

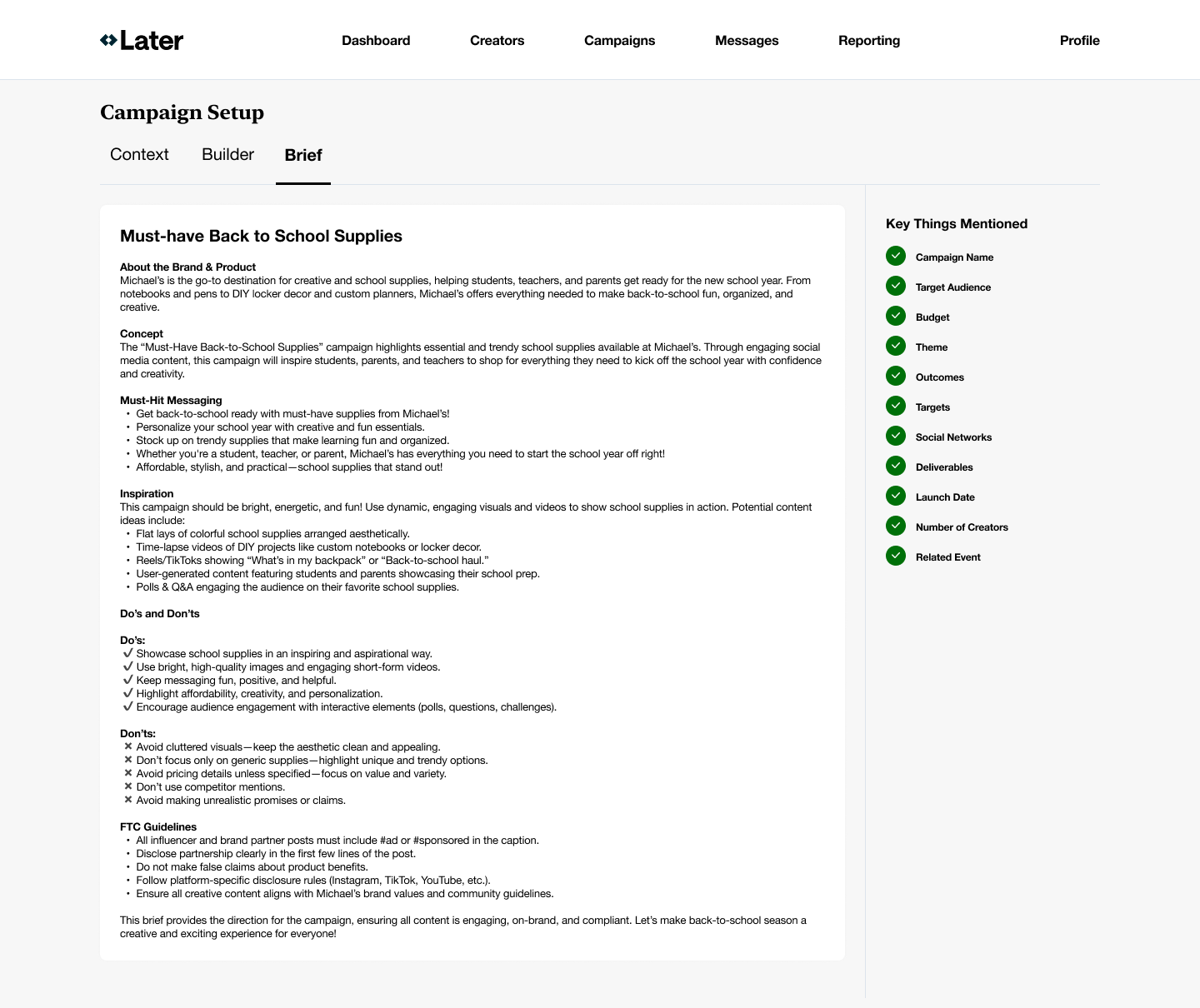

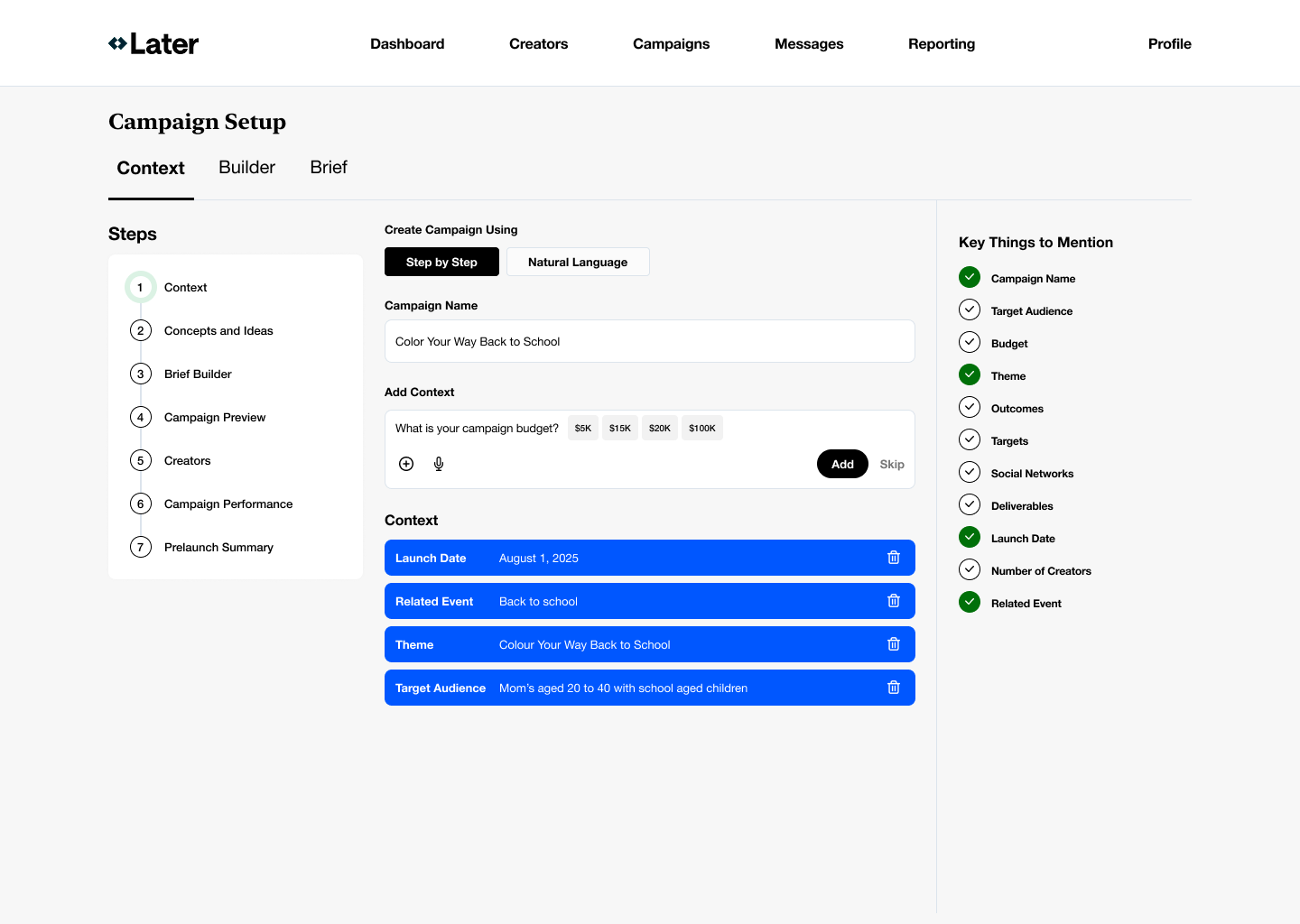

- Translate executive strategy into a coherent experience model

- Absorb ambiguity so teams wouldn’t prematurely lock into solutions

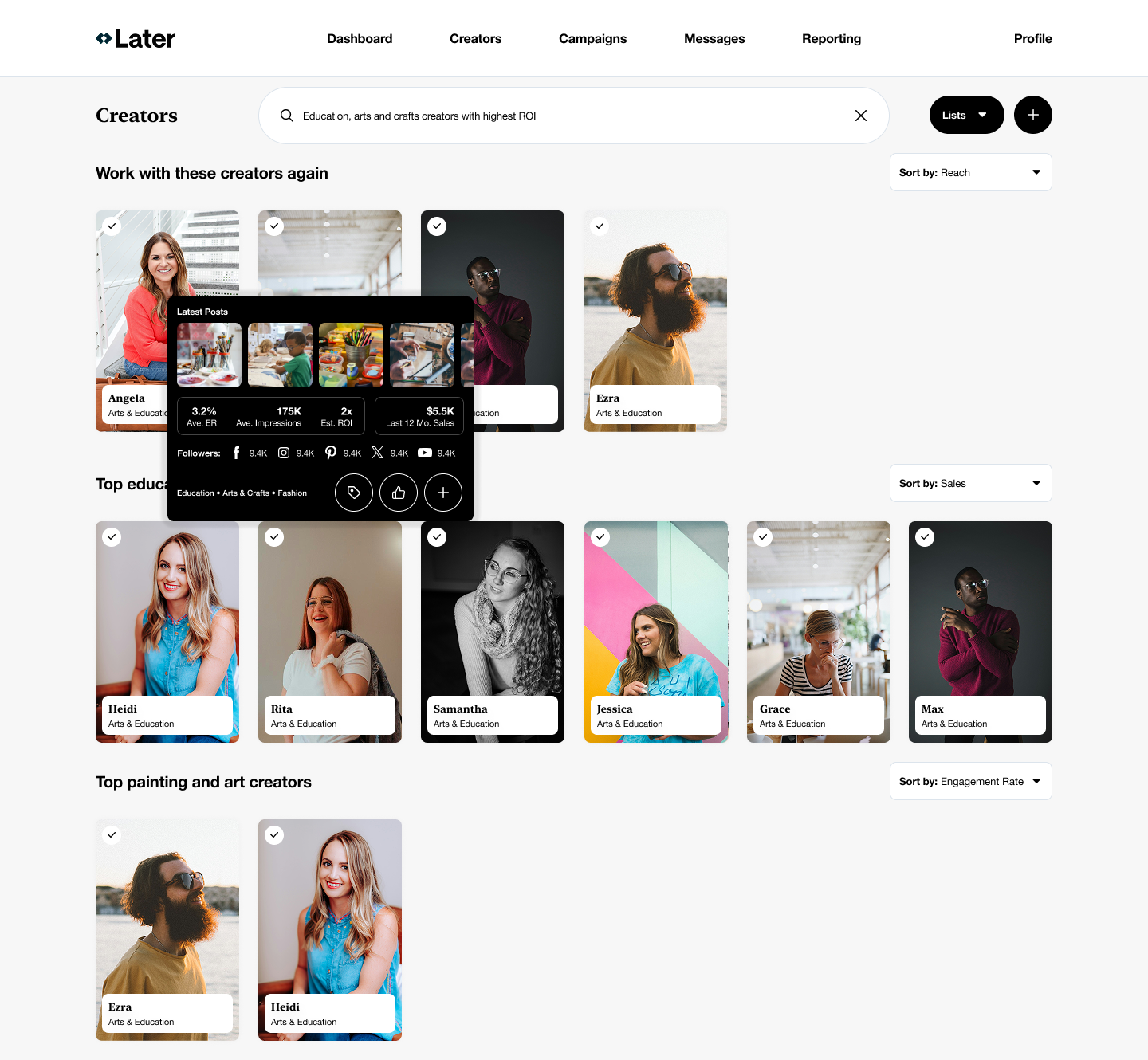

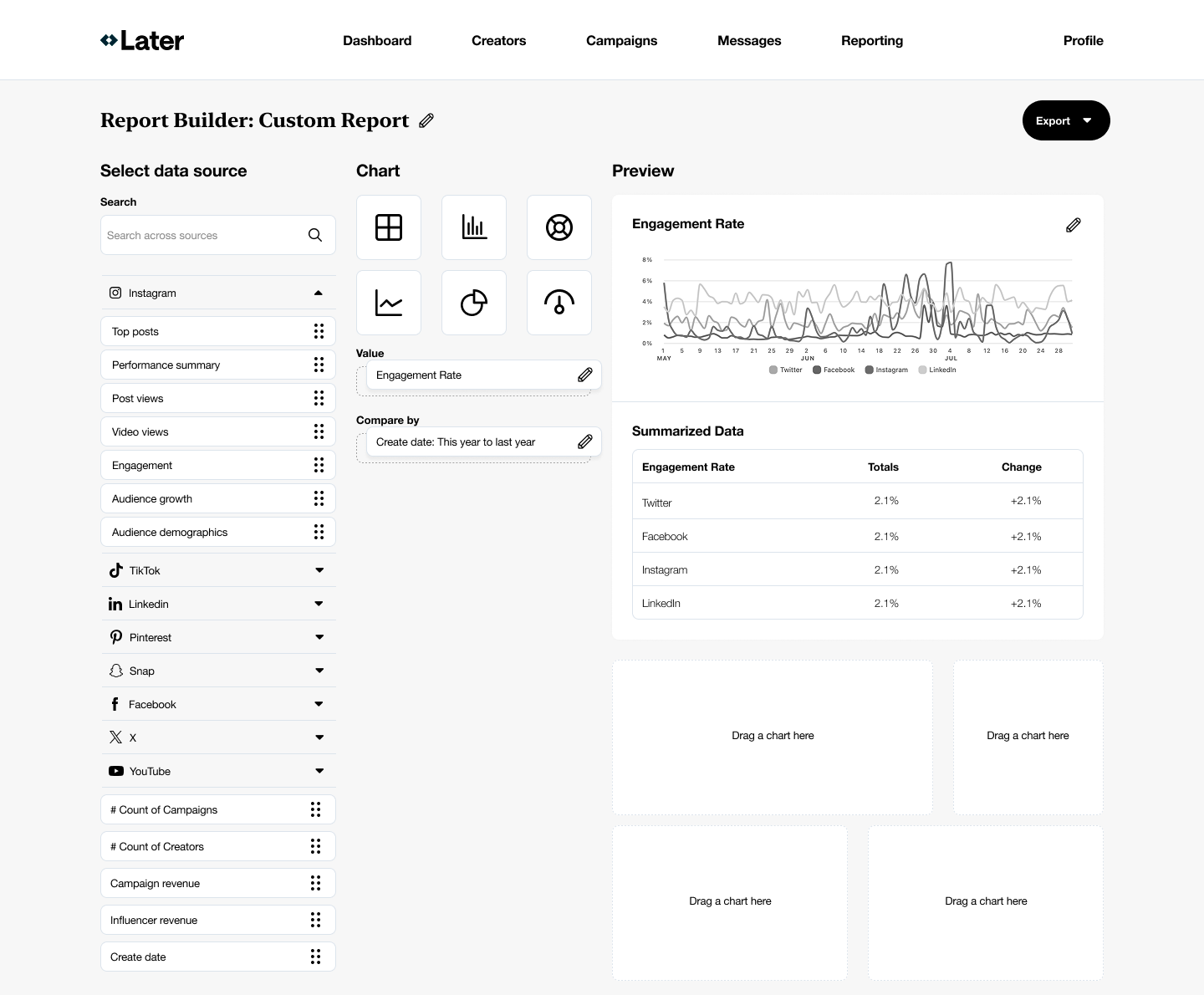

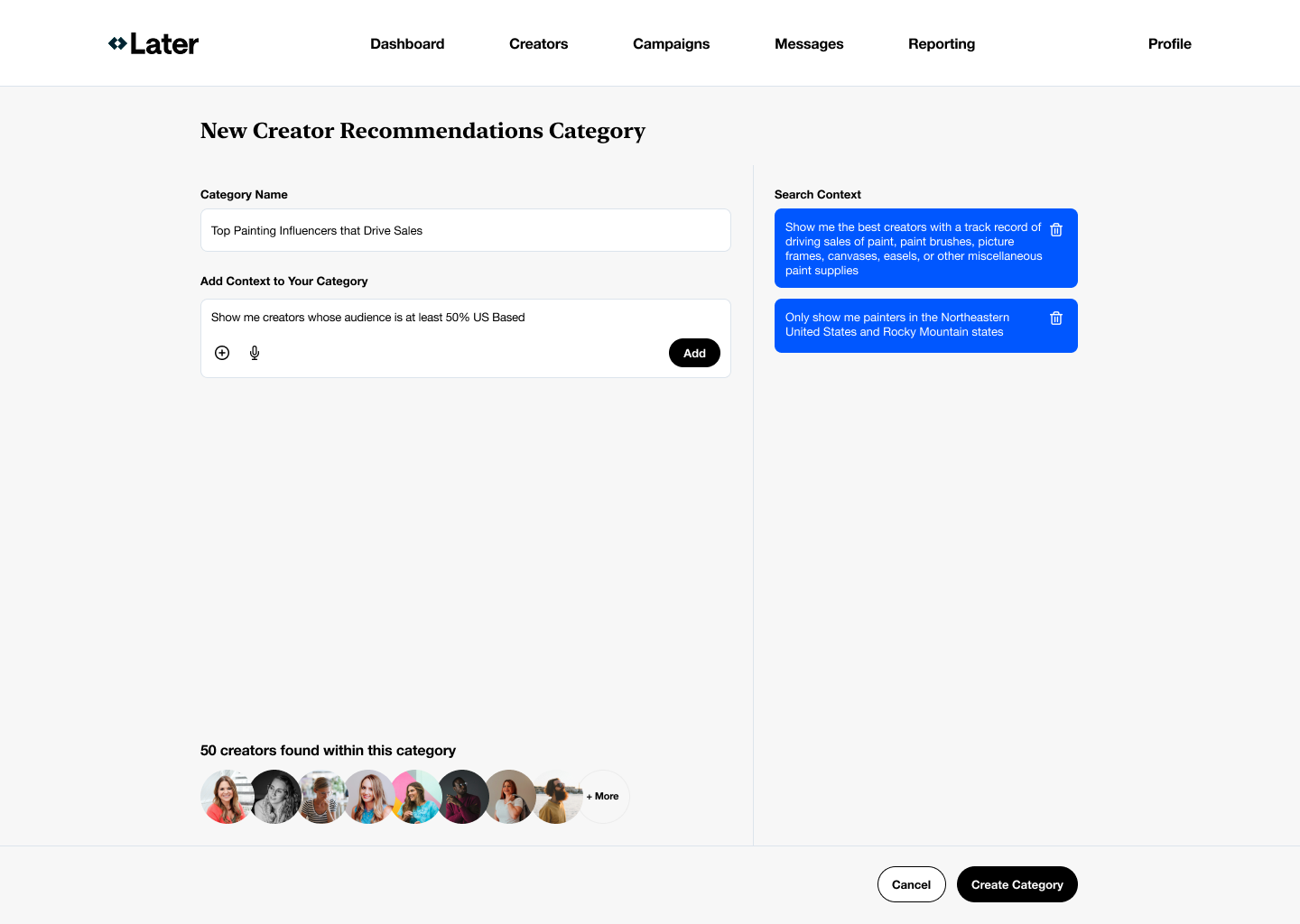

- Define where AI meaningfully improves efficiency and reduces cognitive load

I owned:

- The end-to-end experience vision

- The underlying user mental model

- The articulation of clear design bets teams could align around

Strategic framing: The real design problem

The real problem wasn’t “how do we add AI?” It was:

How do we help marketers understand, trust, and navigate a complex ecosystem of data, creators, campaigns, and outcomes?

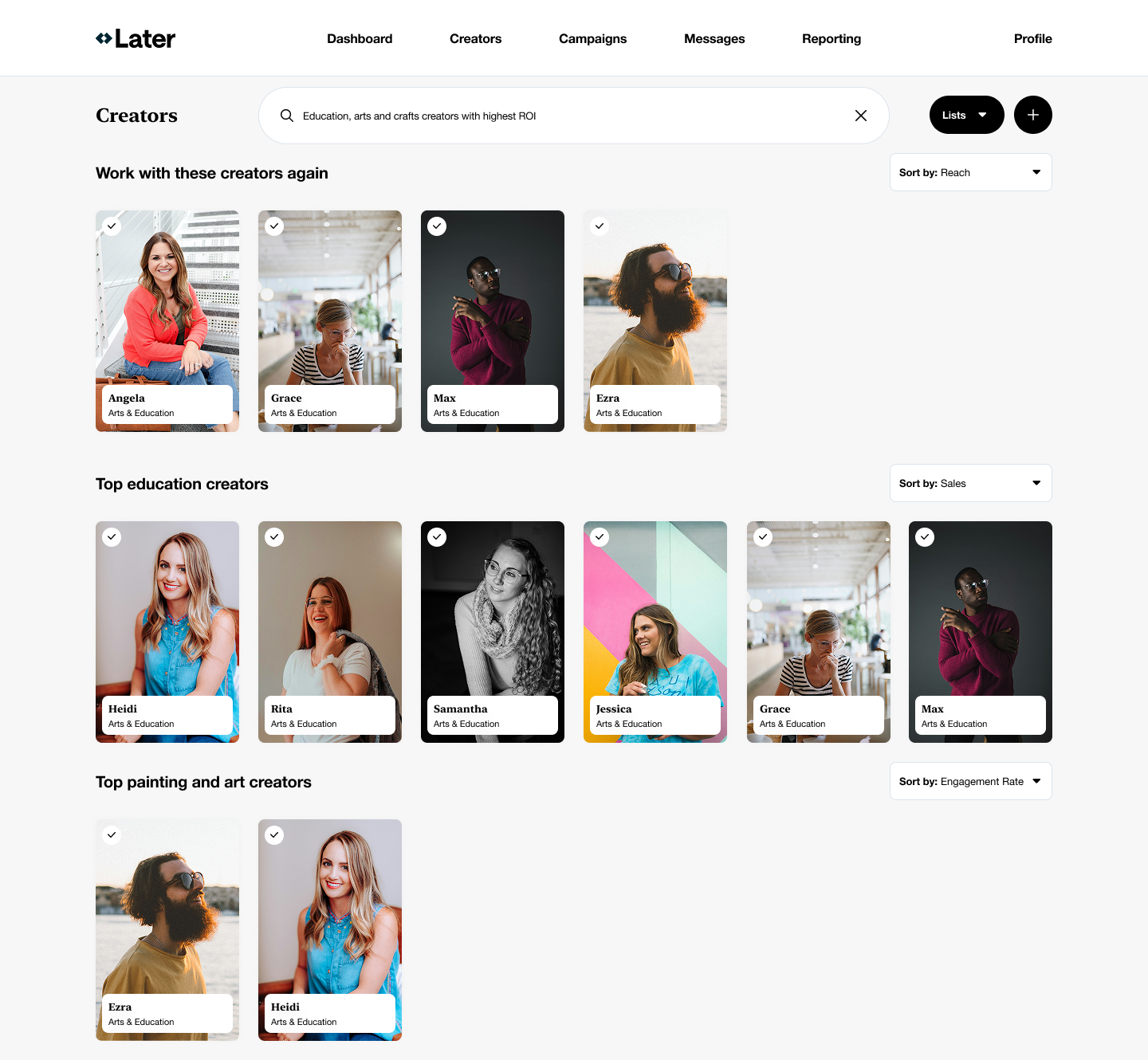

Marketers struggled with:

- Inefficient, fragmented workflows

- Difficulty predicting creator ROI

- Cognitive overload when managing their programs at scale

Research and mental model construction

Given the constraints, I focused on rapid synthesis rather than net-new research. I:

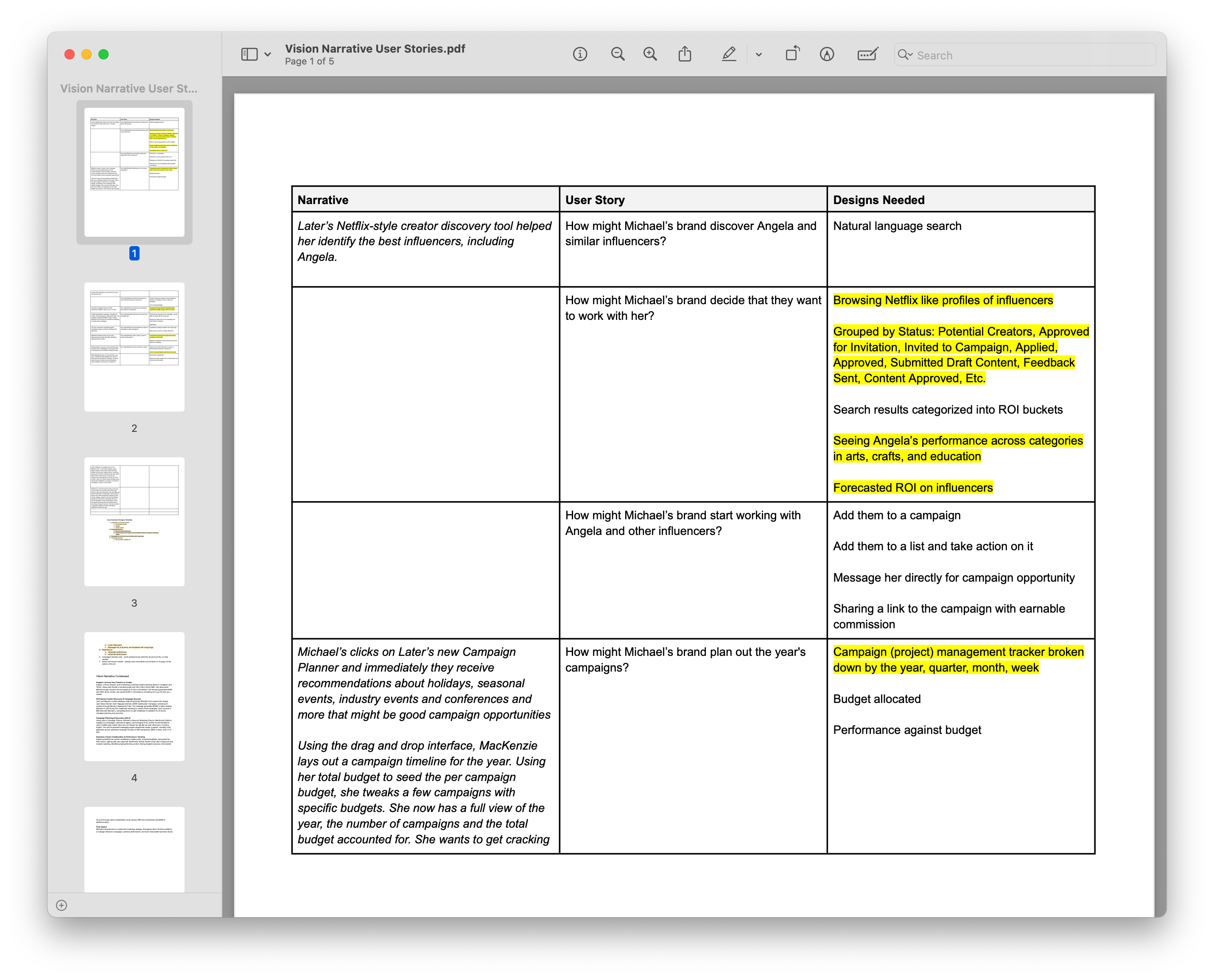

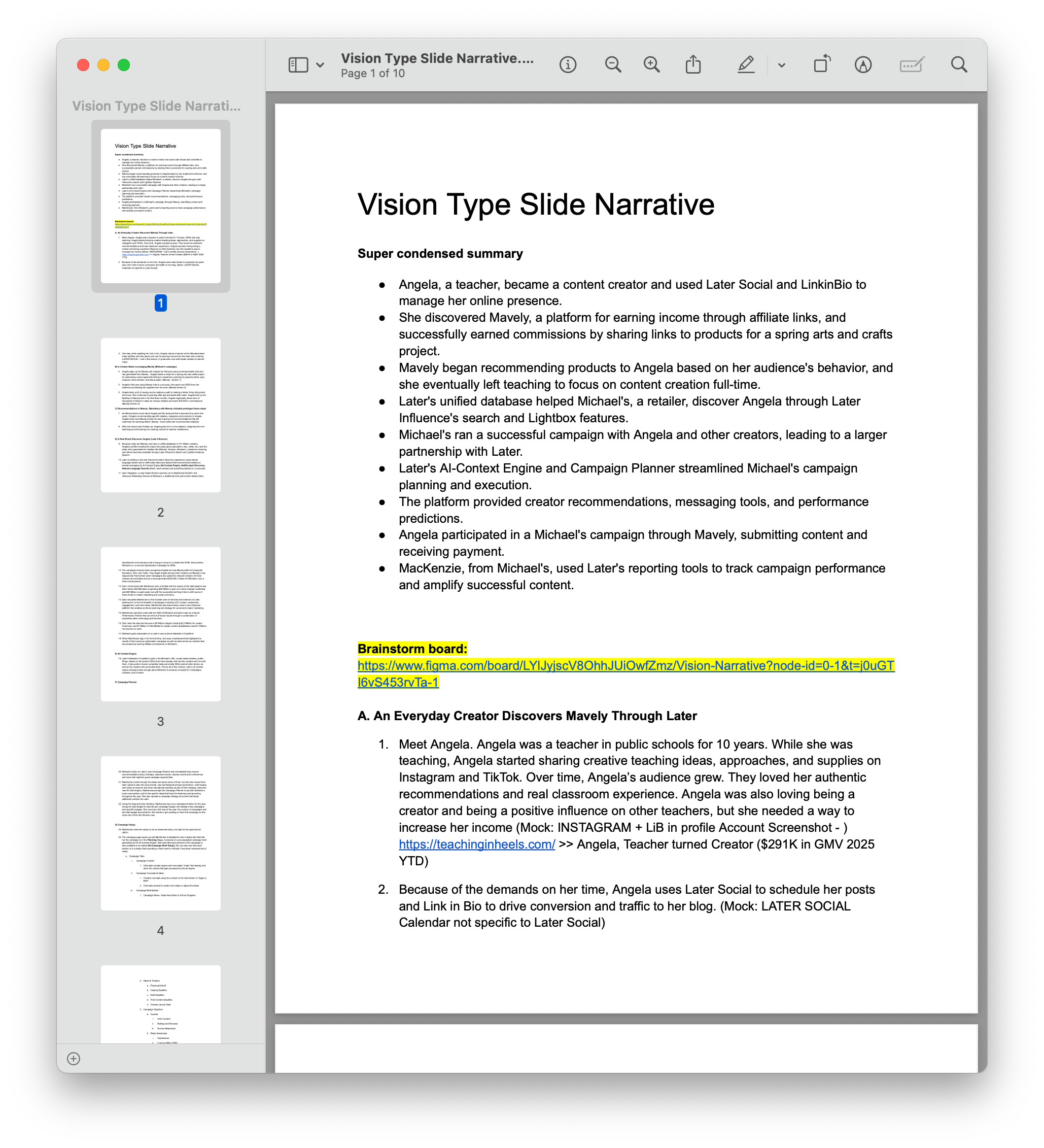

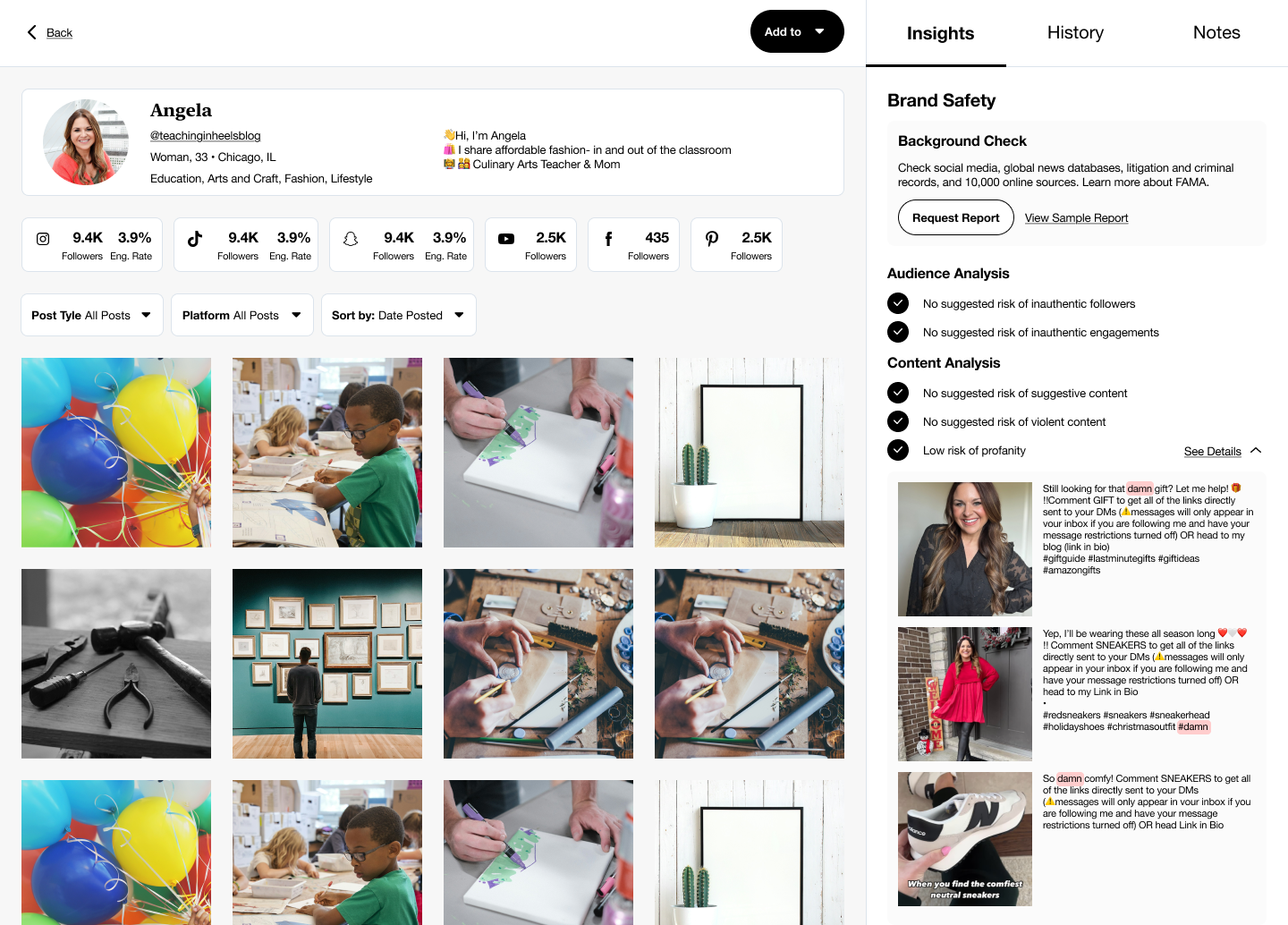

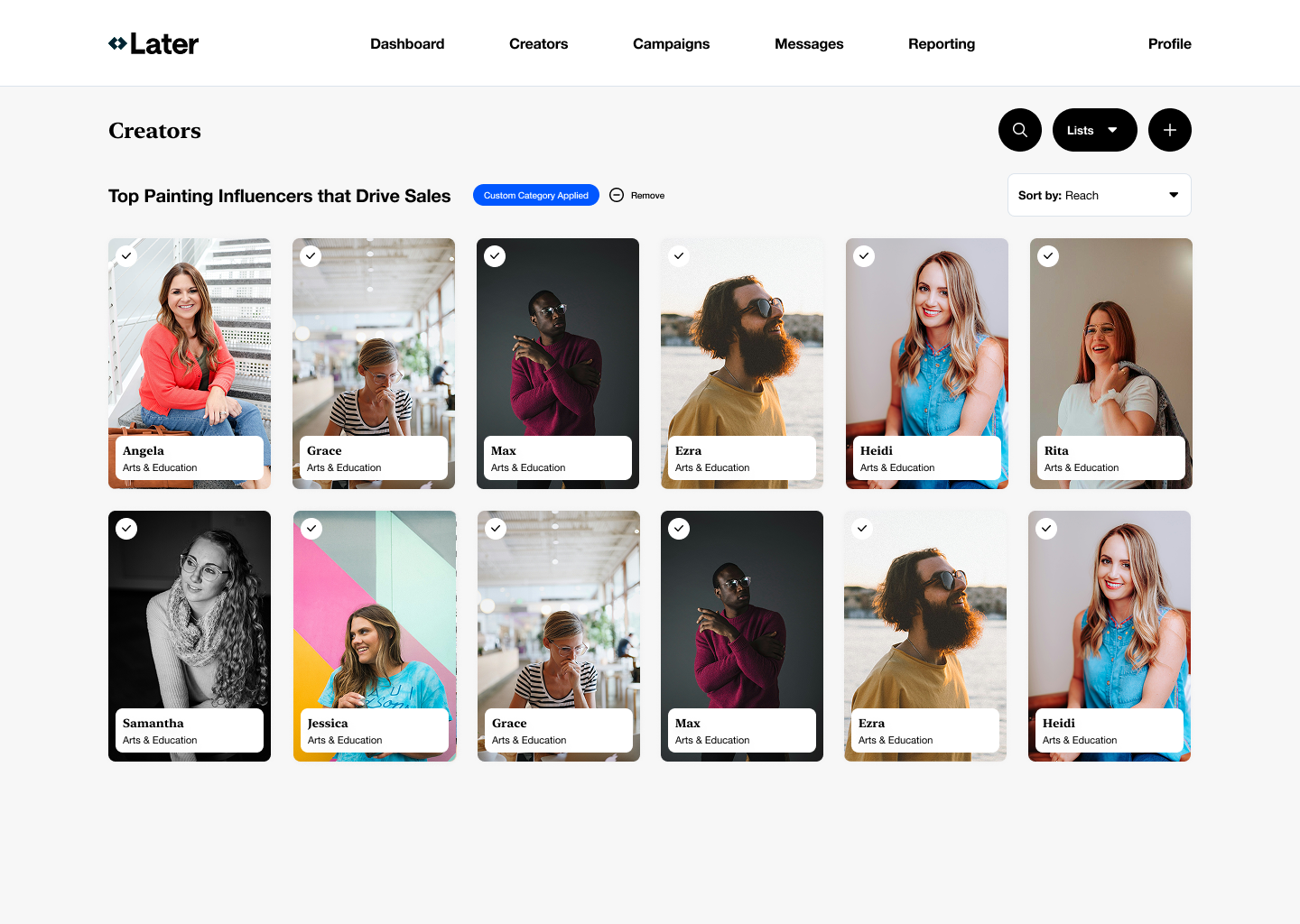

- Anchored the vision in two core personas: marketers and creators

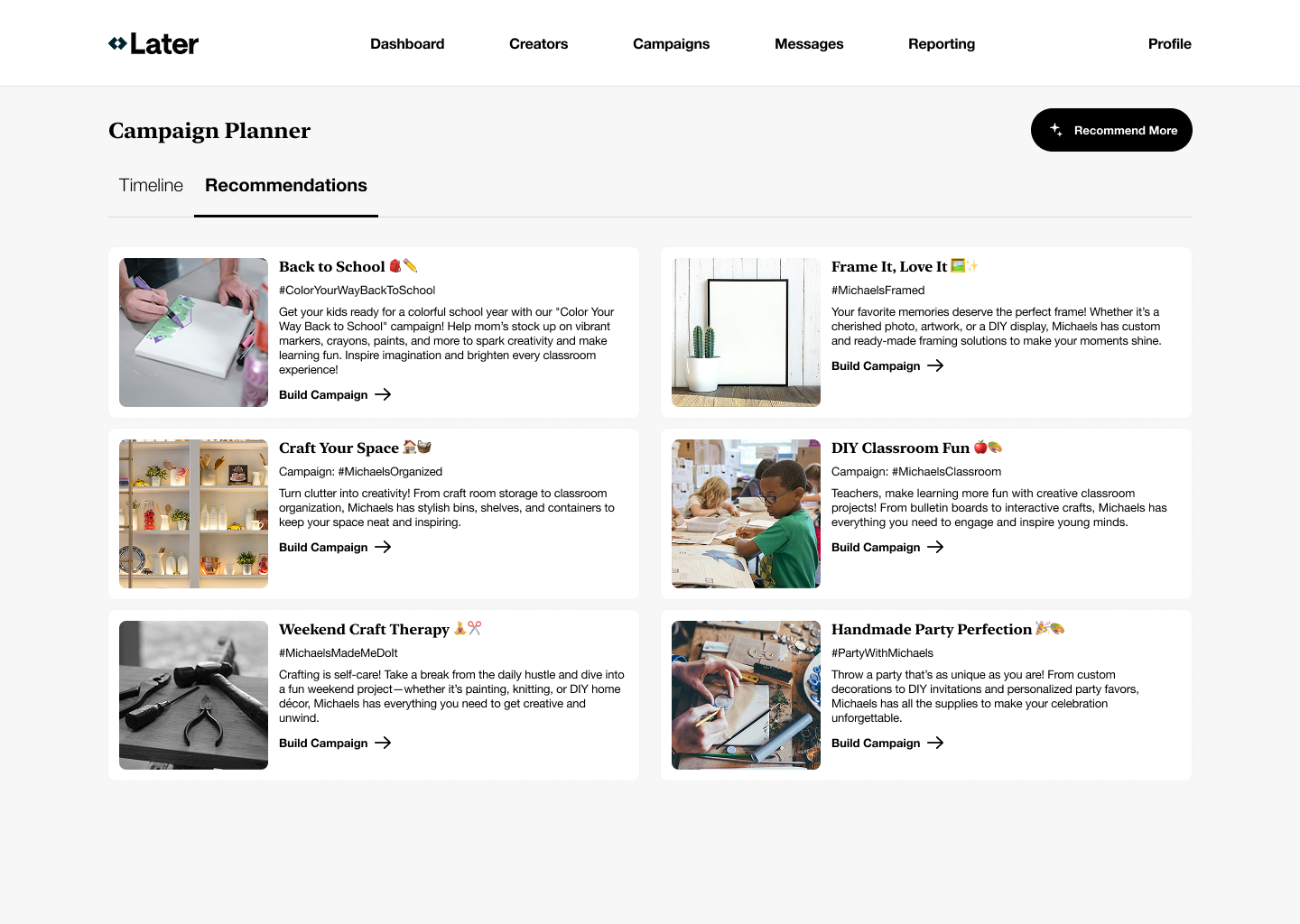

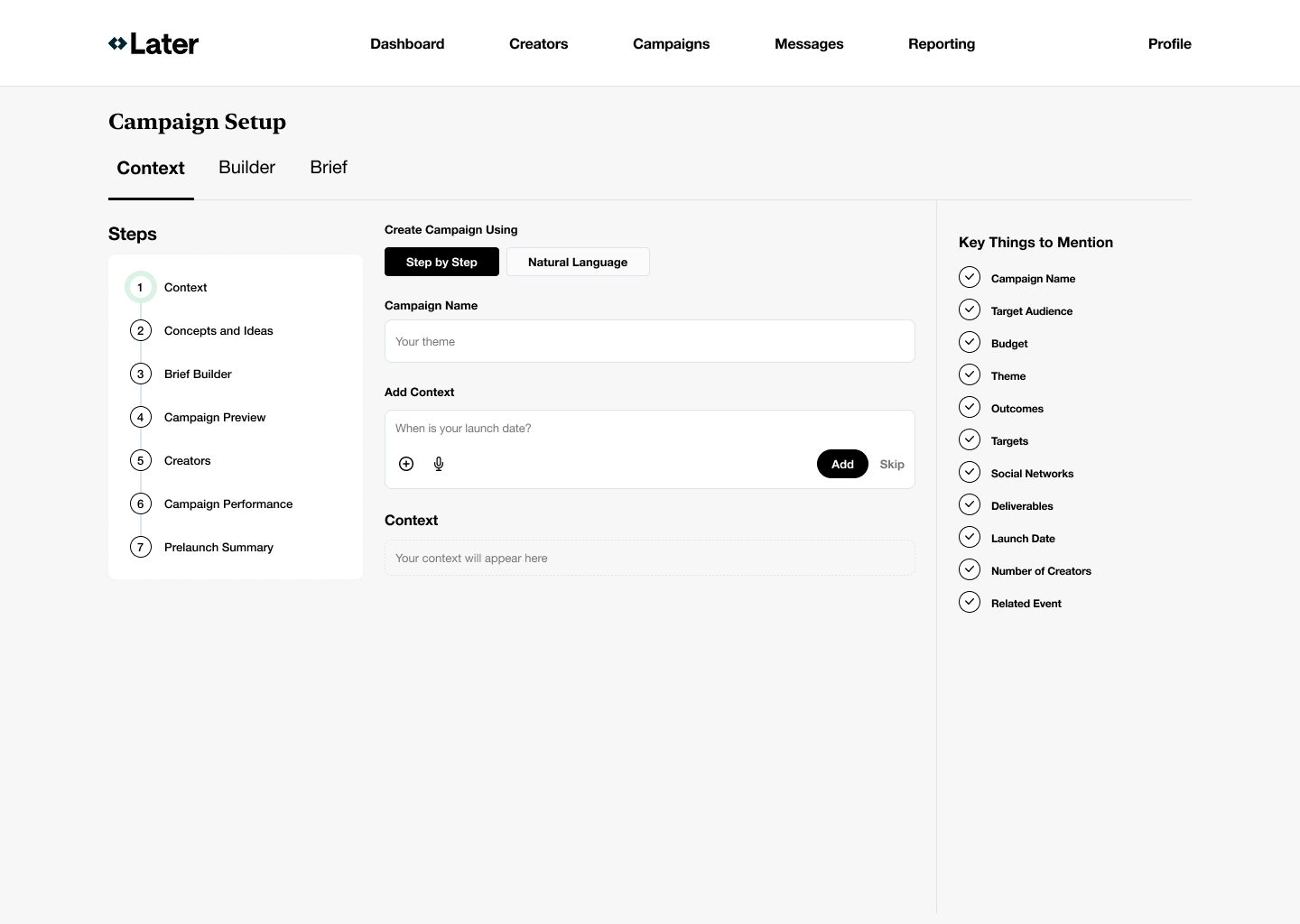

- Mapped the marketer journey as a decision flow, not a feature list

- Studied adjacent systems (ChatGPT, Netflix, HubSpot) for how they progressively reveal complexity

Strategic decisions and tradeoffs

Decisions I made:

- Prioritized predictability and guidance over infinite flexibility

- Treated AI as a supporting actor, not the main interface

- Designed the system to surface confidence when marketers make key selections

Tradeoffs I accepted:

- Chose mid-fidelity, vision-level artifacts to avoid anchoring on UI

- Limited scope to a “north star” rather than a roadmap

- Deferred technical feasibility questions to keep the focus on experience intent

Outcomes and leverage: What changed

Organizational outcomes:

- Secured executive and board alignment around a unified product direction

- Created a shared reference model teams could use to evaluate future AI ideas

- Reduced risk of fragmented, one-off AI features

Design and product outcomes:

- Aligned two design organizations around a common experience philosophy

- Established experience principles that informed downstream roadmap work

- Built momentum and confidence for a longer-term platform strategy

Together, these outcomes aligned how teams think about and approach our unified vision.

Reflection

This project reinforced a principle I now apply consistently:

Complex systems feel simple only when their structure is deliberately designed. AI, like any system feature, amplifies poor structure if left unchecked.

Today, I bring this mindset to:

- Information architecture

- Configuration-heavy experiences

- Platform-level UX decisions

- Agentic systems where users are informed before the system executes